When setting performance measurements, NHS Trusts are often sidetracked by four big myths

In the final lesson of this six-part series, I look at the importance of measuring and interpreting data to create an ongoing culture of improvement. It draws on my evaluation of the UK National Health Service’s (NHS) five-year partnership with the Virginia Mason Institute (VMI), of the US, to illustrate, in a practical way, the components of an improvement infrastructure relevant to every organisation in healthcare.

Each lesson is followed by a ‘tweetchat’ on Twitter hosted by WBS in partnership with the #QIhour network. The lessons are summarised in Six key lessons from the NHS and Virginia Mason Institute partnership.

Those of us interested in quality improvement understand the fundamental importance of measurement: without measurement, you can’t know if you’ve improved.

And the good news is, health systems like the NHS are good at performance measurement, right? Well maybe, maybe not.

Historically, the NHS has been exceptionally good at collecting data, but not so good at interpreting it or using it in a meaningful way. But data is important: without it, we can’t identify the performance gaps we seek to bridge, or evidence changes in performance aligned to our improvement efforts.

The rule of the golden thread

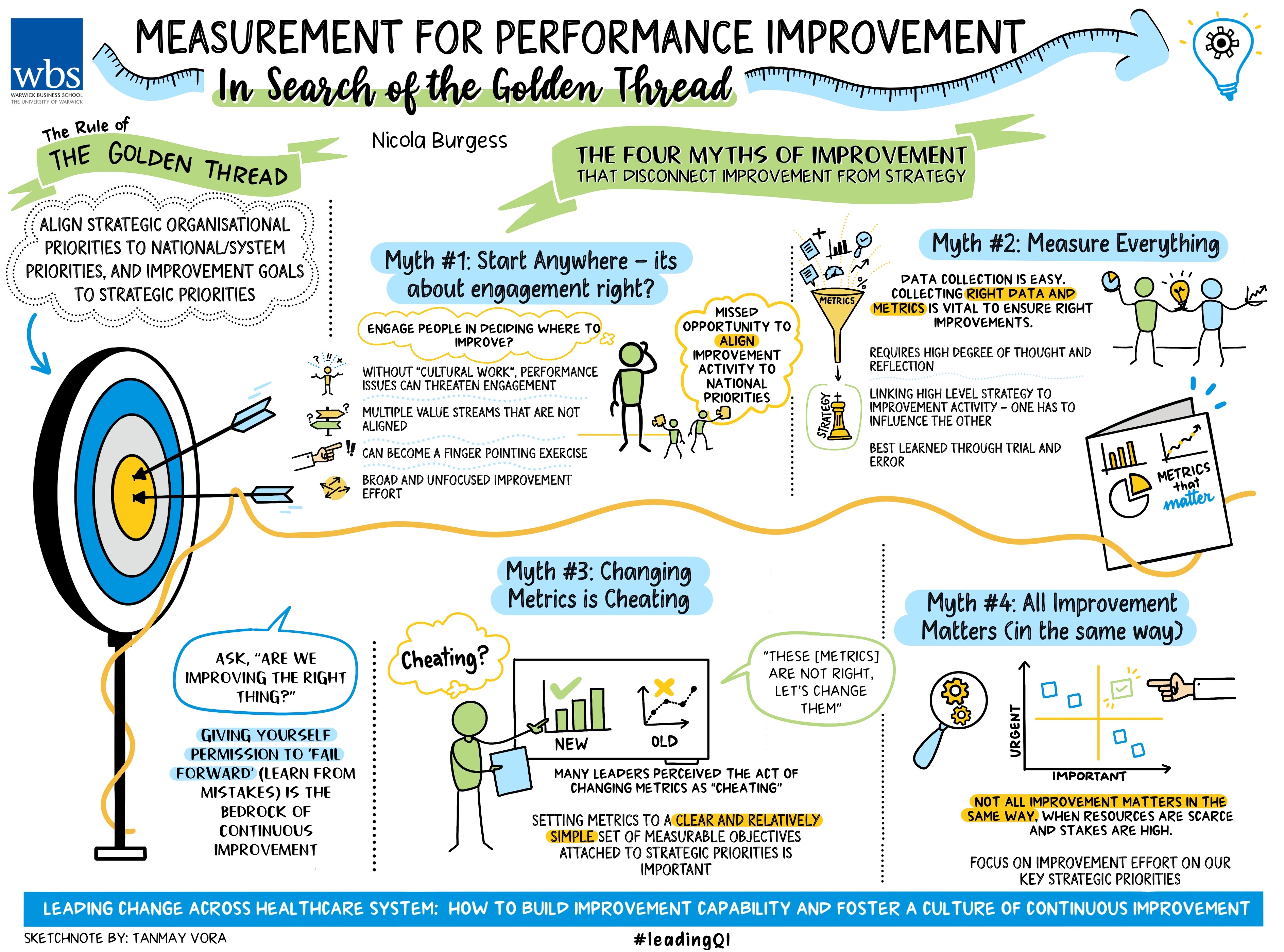

When designing performance metrics, we should follow the rule of the golden thread: align strategic organisational priorities to NHS national priorities, and improvement goals to strategic priorities. Failure to align improvement activity to strategic priorities will at best result in pockets of operational excellence, and at worst result in improvement activity directed at areas that are not a priority, deflecting energy and resource away from areas that require more urgent attention.

But connecting the dots between improvement activity and higher-level metrics is tricky for several reasons. Our evaluation of the NHS-VMI partnership uncovered four myths of measurement for improvement that serve to disconnect improvement activity from strategic goals and blur the path for improved organisational performance.

Myth 1 Start anywhere – it’s about engagement, right?

Engaging as many people as possible in improvement is critical for developing and embedding a continuous improvement culture.

But we must start somewhere. Organisations don’t shift culture overnight. People are naturally wary of change (particularly those who have been there and done it before), and developing improvement skills and experience takes time. So where should we start and why? Aim big or start small? Who should we engage and how will we convince them to lead change? Important questions.

If we start small, we build capability and show that improvement is possible, but will anybody notice or care? Will patients truly benefit? And will it help us build a business case for improvement or entice others to get involved? Or might we just be investing energy and resources into improvement activity that doesn’t directly align to our strategic goals?

If we start big then everyone will know this organisation is committed to change using the improvement method. But does it feel like top-down imposition to the clinical workforce? What if we fail? Do we risk the programme's credibility, adding volume and veracity to the voice of those known to resist the application of improvement methods and practices?

What if we just ask our people where they think we should focus attention? This was the decision taken by two of the five hospital trusts that were part of the NHS-VMI partnership. Incidentally, these two organisations had not undertaken ‘cultural work’ prior to the partnership (the concept of cultural work is explained in lesson one) and both were grappling with culture and performance issues that threatened engagement.

The rationale was sound: to engage people in change, let them decide where change efforts should focus. So, the executive leadership team asked the organisation: where should we focus? One of the organisations voted overwhelmingly to start with what was widely perceived to be the biggest problem: the accident and emergency department (A&E). The other organisation focused on multiple value streams, all of which were important but, sadly, none of which aligned to strategic goals.

The first organisation chose a department (A&E) that was too big and too complex. Problems in A&E are rarely isolated to the department; further, those working in A&E don’t often appreciate being labelled ‘the problem’.

As one of our interview respondents told us: “It became a massive finger pointing exercise.”

The improvement attempt was consequently too broad and unfocused and, sadly, it didn’t work.

For the second organisation, lots of value streams were initiated at pace and lots of improvement workshops took place. A seemingly good start, but over time it became clear there was too much going on: lots of improvement activity, lots of innovation, but sadly not much of it aligned to strategic goals.

As another respondent said: “It was almost like, well it’s your turn to choose - what do you want to do?

“We’ve got burning platforms out there and we should have been focusing on those… and we should have considered other data sources when we were thinking about the value streams.”

In both cases, three years after the NHS-VMI partnership began, the organisations were classified as being in ‘special measures’ by the Care Quality Commission, the independent regulator. While we can’t claim that the outcome for these organisations would have been different had they chosen value streams more wisely, we might conclude that both hospitals missed an opportunity to align improvement activity to national priorities.

To quote another of our interview respondents: “The improvement programme absolutely should allow us to achieve constitutional standards. It should allow us to achieve financial performance. So, there’s a synergy there. But we are absolutely not sitting in a world outside the other.”

Myth 2 Measure everything

This makes total sense. Measure everything: where could we possibly go wrong?

But while data collection is relatively easy, it only gives a snapshot of information at a single point in time (collecting data over time is, of course, far more insightful).

Furthermore, NHS organisations collect and report lots of data; the adage ‘what gets measured gets managed’ is something of a truism. Hence, setting the right metrics and collecting the right data is vital to ensure we are improving the right things. But deciding on the right metrics and measures requires a high degree of thought and reflection, starting with the high-level strategic goals, explicitly linking these to the improvement activity, and checking that one can and does influence the other.

It sounds simple, but deciding which metrics to select to best evidence change can be challenging and is perhaps best learned through trial and error. As one senior leader said: “Originally we set high-level metrics and I suppose one of the things I would reflect on with VMI is that they knew we were setting these high-level metrics, they knew we were never going to deliver them… Now originally I was a bit frustrated about that because I thought you could have saved me a lot of time by telling me we were never going to meet them, but actually I now recognise that the most powerful thing has been to learn about how you have to go back and set those metrics right in the first place and that requires a deeper understanding and more patience than what we had at the time.”

Myth 3 Changing metrics is cheating

We were really surprised to learn that senior leaders were resistant to changing metrics, despite frequently scratching their heads in dismay when all the great improvement work happening in their organisations could not be evidenced by their high-level metrics. Surely something was amiss.

We frequently heard senior leaders lamenting “we can’t seem to move the dial on our high-level metrics”, while also voicing fears that not moving the dial was affecting morale.

Digging deeper, we began to realise that many leaders perceived the act of changing metrics as ‘cheating’. This is quite different from the general malady of ‘gaming’, where managers develop workarounds that allow them to meet a prescribed performance standard, but in ways that fail to deliver the intended benefit for the service user (commonly referred to as ‘hitting the target, missing the point’).

For at least four of the five hospital trusts, it wasn’t until years four and five of the NHS-VMI partnership that they realised the importance of aligning metrics to a clear and relatively simple set of measurable objectives attached to strategic priorities. One said: “We gave ourselves permission to go, these [metrics] are not right, let’s change them.”

Myth 4 All improvement matters (in the same way)

At the heart of the first three myths sits an uncomfortable truth: not all improvement matters (in the same way).

This is a somewhat counter-intuitive point. In healthcare especially, resource is scarce and the stakes are high; therefore, we must be sure to focus improvement effort on our key strategic priorities (that in turn align to national and constitutional priorities).

While improvement does require a certain ‘belief’ that we are doing the right thing, we must also be mindful that, for highly regulated organisations at least, not all improvement matters in the same way.

Permission to ‘fail forward’

I recently attended a Lean Healthcare conference in Chicago hosted by Catalysis and presented my research at the Lean Healthcare Summit hosted by the Center for Lean Healthcare Engagement and Research (CLEAR).

One of the questions repeatedly asked was: “Are we improving the right thing?” In other words, don’t forget to check.

Giving yourself permission to ‘fail forward’ - ie learning from mistakes - forms the bedrock of continuous improvement.

Further reading:

Burgess, N., Currie, G., Crump, B. and Dawson, A. 2022. Leading change across a healthcare system: how to build improvement capability and foster a culture of continuous improvement: lessons from an evaluation of the NHS-VMI partnership.

The final tweetchat will be held on Tuesday July 11, 7-9pm, GMT. Follow the hashtags #LeadingQI and #QIhour to join and learn more from the NHS-VMI Partnership.

Nicola Burgess is Reader of Operations Management and teaches Operational Management on the Distance Learning MBA plus Digital Innovation in the Healthcare Industry on the Executive MBA.

Follow Nicola Burgess on Twitter @DrNicolaBurgess.

More lessons from the Virginia Mason Institute – NHS Partnership:

1 Build cultural readiness as the foundation for better QI outcomes

2 Embed QI routines and practices into everyday practice

3 Leaders show the way and light the path for others

4 Relationships aren’t a priority, they’re a prerequisite

5 Holding each other to account for behaviours, not just outcomes

6 The rule of the golden thread: not all improvement matters in the same way

For more articles on Healthcare and Wellbeing sign up to Core Insights here.
